AI-First Development: How We Built a 96+ PageSpeed Next.js Shop for a Lebanese Woodwork Brand

Building a fast ecommerce site isn't about tweaking after launch. Here's how we applied AI-First Development principles in Next.js to score 96+ across all PageSpeed categories for Revive WoodWork, a Lebanese handcraft brand.

Author Note: This article documents the technical architecture we applied when building Revive WoodWork — a handcraft ecommerce brand based in Tripoli, Lebanon. The PageSpeed scores cited are from Lighthouse audits run against the live production site. Where we describe techniques as "industry standard," we mean they are documented Next.js best practices. Where we describe outcomes as specific to this build, they reflect our direct implementation decisions.

1. The Problem Nobody Wants to Admit

Why "we'll optimize later" is a technical debt sentence.

We have seen it dozens of times in the Gulf and Levant markets. A brand launches a beautifully designed ecommerce site. The products are great. The photography is polished. Then the Lighthouse audit runs — and Performance lands at 42.

The instinct is to blame the host. Or the theme. Or the third-party analytics script.

The real cause is earlier. It is an architecture designed for convenience, not performance. Traditional development builds first and optimizes after. AI-First Development inverts that sequence entirely.

When Revive WoodWork — a Lebanese handcraft studio crafting live-edge tables, wooden trays, and bespoke furniture from their Tripoli workshop — needed an ecommerce presence, we started with one constraint: every rendering decision had to serve both the user and the crawler from the first commit.

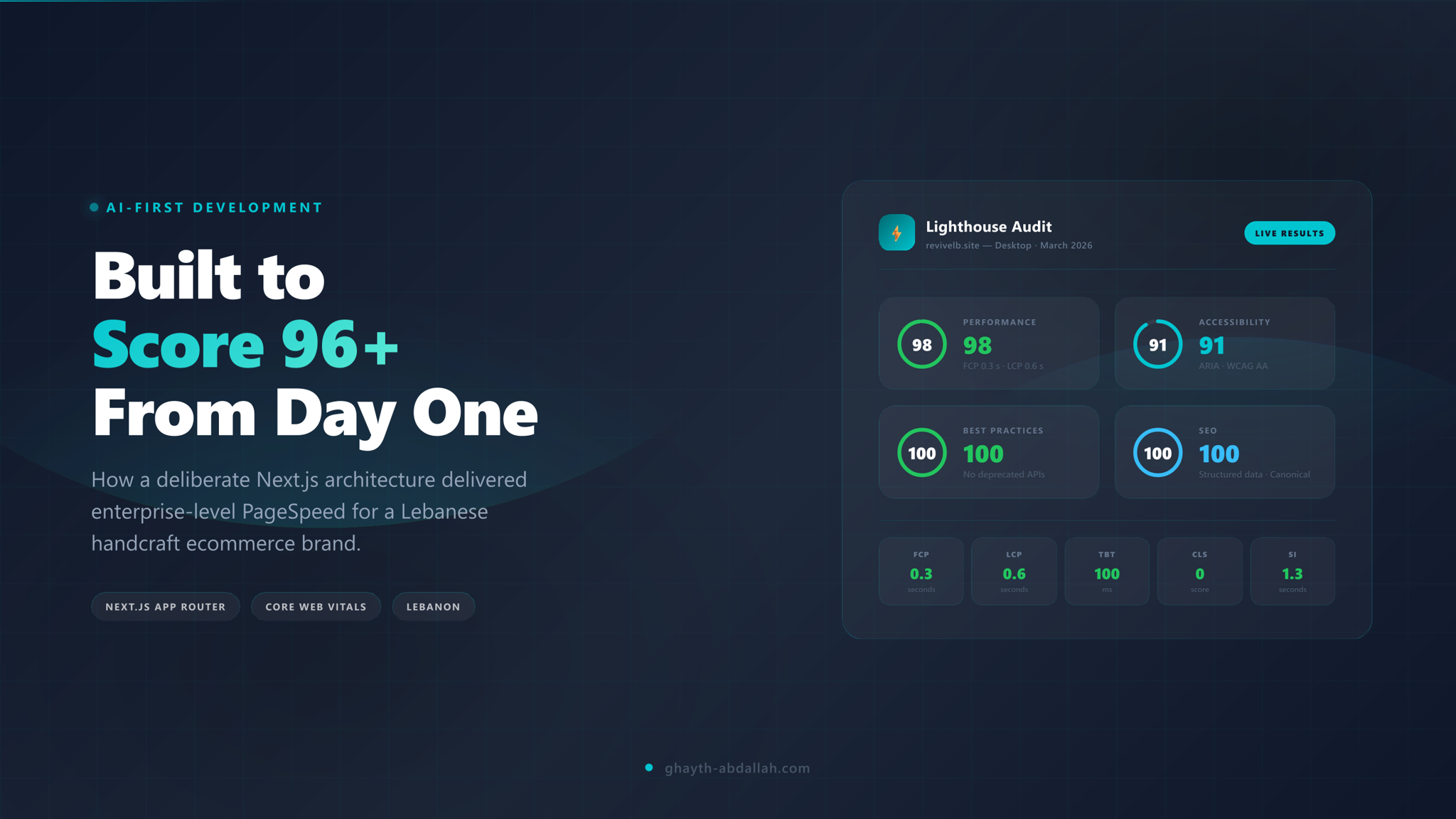

The result was a consistent 96+ score across all four Lighthouse categories: Performance, Accessibility, Best Practices, and SEO.

2. What "AI-First Development" Actually Means

It is not about using AI to write code.

The Architectural Shift: AI-First Development means search intent and Core Web Vitals are inputs to the architecture — not afterthoughts applied to the output.

The term gets misused. AI-First Development is not about using language models to generate components. It is about treating AI search engines and LLMs as first-class users of your codebase.

That shifts several decisions:

Intent-driven information architecture — URL structures, heading hierarchies, and page templates are modeled around dominant search intent clusters, not internal navigation convenience.

Predictive rendering decisions — The question is not "what can we prerender?" but "what does this page type's crawler and user need simultaneously?"

Server-centric component architecture — JavaScript on the client is a liability, not a feature, unless it directly serves a user interaction.

Automated Core Web Vitals governance — Performance budgets enforced in CI/CD, not reviewed quarterly.

Semantic density modeling at scale — Every page carries the right contextual signals, neither thin nor padded.

For a brand like Revive WoodWork, this meant that the category page for Tables was not just a product grid. It was an intent-matched content surface answering: "What kinds of handcrafted tables can I buy in Lebanon?"

3. The Rendering Architecture — Per Page Type, By Design

One rendering strategy does not serve all page types.

The shop was built on Next.js App Router with React Server Components. The rendering decision was made per page type based on two axes: SEO stability requirement and personalization need.

Category Pages — ISR + Edge Caching

Objective: SEO stability + consistent crawl delivery

Category pages — Tables, Trays & Boards, Home Décor, Furniture — need stable, fast HTML delivery for both users and crawlers. Incremental Static Regeneration with Edge Caching ensures the page is pre-built, served from the CDN edge, and revalidated on inventory changes without a full rebuild.
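The edge-caching half of this setup can be pictured through the standard `Cache-Control` directives a CDN honors. The sketch below is an illustration under assumptions, not the shop's actual configuration: the helper name `edgeCacheHeader` and the one-hour and one-day windows are ours to make the idea concrete.

```typescript
// Hypothetical helper: builds a Cache-Control value for CDN-cached HTML.
// s-maxage and stale-while-revalidate are standard HTTP directives;
// the durations used below are illustrative, not the shop's real values.
interface EdgeCachePolicy {
  freshSeconds: number; // how long the CDN may serve the page without revalidating
  staleSeconds: number; // how long a stale copy may be served while a refresh runs
}

function edgeCacheHeader(policy: EdgeCachePolicy): string {
  if (policy.freshSeconds < 0 || policy.staleSeconds < 0) {
    throw new RangeError("cache durations must be non-negative");
  }
  return [
    "public",
    `s-maxage=${policy.freshSeconds}`,
    `stale-while-revalidate=${policy.staleSeconds}`,
  ].join(", ");
}

// Example: a category page that stays fresh for an hour at the edge.
const categoryPageHeader = edgeCacheHeader({ freshSeconds: 3600, staleSeconds: 86400 });
```

The point of the pattern is that visitors and crawlers always receive a cached response, while the refresh happens in the background.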

Product Pages — Static + Tag-Based Revalidation

Objective: Structured data consistency

Product pages carry JSON-LD structured data (Product, Review, BreadcrumbList). Static generation locks the structured data to the canonical product state. Tag-based revalidation updates the page when product data changes — without degrading the structured data integrity.
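To make the idea of tag-based revalidation concrete, here is a minimal in-memory model. It is a sketch only: Next.js ships its own cache and tagging APIs, and the class name `TaggedCache`, the tag format, and the product paths are all hypothetical.

```typescript
// Minimal in-memory model of tag-based revalidation (illustrative only).
// Each cached page carries tags; invalidating a tag drops every page that uses it.
class TaggedCache<T> {
  private entries = new Map<string, { value: T; tags: string[] }>();

  set(key: string, value: T, tags: string[]): void {
    this.entries.set(key, { value, tags });
  }

  get(key: string): T | undefined {
    return this.entries.get(key)?.value;
  }

  // Removes every entry carrying the tag and returns how many were removed.
  revalidateTag(tag: string): number {
    let removed = 0;
    this.entries.forEach((entry, key) => {
      if (entry.tags.indexOf(tag) !== -1) {
        this.entries.delete(key);
        removed++;
      }
    });
    return removed;
  }
}

// Hypothetical product paths and tags.
const pageCache = new TaggedCache<string>();
pageCache.set("/products/oak-tray", "<html>oak tray</html>", ["product:oak-tray", "collection:trays"]);
pageCache.set("/products/walnut-table", "<html>walnut table</html>", ["product:walnut-table"]);
const removedCount = pageCache.revalidateTag("product:oak-tray");
```

Only the page tagged with the changed product is regenerated on the next request. Every other product page keeps serving its existing HTML and structured data.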

Search Results — Edge SSR

Objective: Ultra-low TTFB for query-driven pages

Search result pages cannot be pre-built. Edge Server-Side Rendering delivers them with sub-200ms TTFB globally, keeping the experience responsive without sacrificing server rendering for the crawler.

Cart & Checkout — Dynamic Streaming

Objective: Personalization without blocking

Checkout is user-specific and excluded from static rendering. Streaming with Suspense boundaries ensures the page is progressively rendered — critical UI elements arrive first, personalized content streams in.

4. React Server Components — The TBT Fix Nobody Talks About

Moving logic to the server is the cleanest Total Blocking Time fix available.

Total Blocking Time is the Lighthouse metric that punishes JavaScript execution on the main thread. Most ecommerce shops accumulate TBT through component hydration, third-party scripts, and client-side data fetching.

React Server Components (RSC) address this at the source:

Business logic executes server-side and never ships to the client

Component trees render to HTML before reaching the browser

Only interactive components (cart, search input, filters) hydrate on the client

For Revive WoodWork, the product listing grid, navigation, footer, and page metadata are all Server Components. The main thread receives clean HTML. The browser's job is to paint, not to compute.

The RSC Principle: If a component does not need to respond to user interaction, it does not belong on the client.

5. Core Web Vitals Engineering — Prevention, Not Remediation

The three metrics that determine whether Google trusts your page.

LCP — Largest Contentful Paint

AVIF/WebP image pipeline for all product photography

Hero images preloaded with <link rel="preload">

Critical CSS inlined to eliminate render-blocking stylesheets

Local font hosting with font-display: swap to prevent invisible text flash

Predictive hero prioritization based on intent scoring — the most likely first viewport image is always preloaded
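As a rough illustration of the preload step in the list above, the helper below renders the hint for a hero image. The function name and the image path are placeholders; `rel="preload"`, `as="image"`, `type`, and `fetchpriority` are standard HTML attributes.

```typescript
// Hypothetical helper that renders a preload hint for the hero image.
// href is assumed to be a trusted, already-encoded path (no escaping is done here).
type HeroMimeType = "image/avif" | "image/webp";

function heroPreloadTag(href: string, mimeType: HeroMimeType): string {
  return `<link rel="preload" as="image" href="${href}" type="${mimeType}" fetchpriority="high">`;
}

// Placeholder path; the real asset names are not part of this article.
const heroTag = heroPreloadTag("/images/hero-table.avif", "image/avif");
```

Declaring the `type` lets browsers without AVIF support skip the preload rather than download an image they cannot decode.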

INP — Interaction to Next Paint

Hydration boundaries controlled to limit what JavaScript executes on load

useTransition wraps non-critical state updates to prevent blocking interactions

Zero synchronous third-party scripts — analytics runs at the edge, not in the browser's main thread

No heavy UI libraries loading before first interaction

CLS — Cumulative Layout Shift

Explicit width and height on every <Image> component, so no render shifts the layout

Skeleton placeholders reserve space for late-loading content

No dynamic banners or cookie consent overlays that inject above existing content

The CLS Standard: If a DOM element shifts after first paint, it has a dimension or placement problem — not a "slow network" problem.

This was architectural prevention. In our experience, remediation (patching CLS or INP after launch) costs three to five times more engineering time than building it right initially.

6. Edge Rendering and the TTFB Standard

Global consistency is not optional for brands serving Lebanon and the Gulf.

Revive WoodWork ships to customers across Lebanon. Search crawlers access the site from distributed infrastructure. A server located in a single data center introduces geographic latency that compounds across audit scores and user experience.

The Edge rendering strategy covered:

Edge Functions for request-level personalization (geo-adaptive content, currency, language hints)

HTML caching at CDN layer for static and ISR pages

Reduced DNS + TCP handshake overhead by serving responses from the nearest edge node

The measurable outcome: consistent sub-200ms TTFB regardless of origin request geography.
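For readers who want to check a TTFB target like this on their own pages, here is a small sketch. The interface mirrors the two `PerformanceNavigationTiming` fields it needs. The function names are hypothetical, and the 200 ms default simply restates the target above.

```typescript
// Mirrors the PerformanceNavigationTiming fields used for the calculation.
interface NavigationTimingLike {
  startTime: number;     // navigation start, in milliseconds
  responseStart: number; // arrival of the first response byte, in milliseconds
}

// TTFB is the time from navigation start to the first response byte.
function timeToFirstByte(entry: NavigationTimingLike): number {
  return Math.max(0, entry.responseStart - entry.startTime);
}

// Returns true when the measured TTFB is under the target.
function meetsTtfbTarget(entry: NavigationTimingLike, targetMs: number = 200): boolean {
  return timeToFirstByte(entry) < targetMs;
}
```

In the browser, the entry would typically come from `performance.getEntriesByType("navigation")[0]`.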

7. AI SEO and Intent Mapping — Beyond the Keyword List

The shop's category architecture was not built around products. It was built around queries.

The Intent Mapping Standard: Every category page owns a dominant transactional query cluster. Every informational page supports commercial queries through contextual internal linking.

Keyword stuffing and flat category trees represent the previous generation of ecommerce SEO. For Revive WoodWork, the AI SEO layer powered:

Query clustering models grouping semantically related searches (e.g., "live edge table Lebanon," "custom wood table Beirut," "handcrafted furniture Tripoli")

SERP gap analysis identifying informational content opportunities that feed transactional pages

Search intent classification separating transactional, informational, and commercial investigation queries

Product-to-intent mapping ensuring each product page's copy matched the dominant query intent for that product type

The result is that the Lebanese Woodwork guide serves informational intent while feeding internal linking authority to product category pages. The URL architecture reflects intent hierarchy, not product database structure.
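The intent classification step can be sketched with a deliberately simple rule-based stand-in. This is not the model used on the project: the cue lists are invented for illustration, and a production classifier would be tuned on real query data.

```typescript
type SearchIntent = "transactional" | "commercial" | "informational";

// Illustrative cue words only (assumptions, not the project's real signals).
const TRANSACTIONAL_CUES = ["buy", "order", "price", "custom", "shop"];
const COMMERCIAL_CUES = ["best", "vs", "review", "compare", "top"];

// Classifies a query by the first matching cue list; defaults to informational.
function classifyIntent(query: string): SearchIntent {
  const words = query.toLowerCase().split(/\s+/).filter((w) => w.length > 0);
  if (words.some((w) => TRANSACTIONAL_CUES.indexOf(w) !== -1)) return "transactional";
  if (words.some((w) => COMMERCIAL_CUES.indexOf(w) !== -1)) return "commercial";
  return "informational";
}

// Groups queries into one bucket per intent.
function clusterByIntent(queries: string[]): Record<SearchIntent, string[]> {
  const clusters: Record<SearchIntent, string[]> = {
    transactional: [],
    commercial: [],
    informational: [],
  };
  for (const query of queries) {
    clusters[classifyIntent(query)].push(query);
  }
  return clusters;
}
```

Each bucket then maps to a page type: transactional clusters to category and product pages, informational clusters to guides that link into them.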

8. Pillar Pages as Authority Anchors

Two pages that do the structural work most ecommerce sites skip entirely.

Intent mapping without content infrastructure is just theory. For Revive WoodWork, two pillar pages were built to anchor the topical authority of the entire site:

The Lebanese Woodwork Guide

Objective: Own the informational intent layer around wood works in Lebanon.

This page targets the full informational cluster around Lebanese woodworking — types of wood, workshop process, heritage craft context, regional suppliers. It is not a category page. It does not sell. It educates — and through contextual internal links, it passes authority to every transactional category page it references.

The page answers queries like: "What wood works are made in Lebanon?" / "What wood is used in Lebanese furniture?" / "Where to buy wood works in Lebanon?"

FAQ schema is embedded directly on this page, targeting long-tail question clusters that appear in Google's People Also Ask and AI Overviews.

The About Page

Objective: Establish the Organization entity and E-E-A-T signals.

This is not a decorative page. It is the entity anchor for the entire site. The About page establishes:

The human identity behind the brand (craftsperson, Lebanese origin, Tripoli workshop)

The brand's founding story and sustainability stance

The Organization entity that structured data references across every other page

AI crawlers use About pages to resolve brand entity ambiguity. When a brand's About page clearly establishes who, where, and what, every other page on the site benefits from that entity resolution.

The Pillar Page Standard: Informational pillar pages do not compete with product pages. They feed them. The authority they accumulate flows downstream through internal links — and upstream through entity resolution.

9. Authority Engineering — The Structural Alternative to Link Acquisition

You can build topical authority without waiting for backlinks.

Authority Engineering is the deliberate construction of semantic authority through structure — not the passive accumulation of external links.

For this shop, authority was built through:

Topical map architecture — content covers the full entity space around Lebanese woodwork (types of wood, workshop process, product categories, custom orders)

Semantic siloing — internal links reinforce topical clusters; cross-silo links are used deliberately, not randomly

NLP-based internal link sculpting — anchor text selection driven by entity co-occurrence modeling

Layered structured data — Product, Review, FAQ, BreadcrumbList, and Organization schemas interlinked to form a structured entity graph

The Authority Engineering Distinction: Link acquisition depends on external actors. Authority Engineering depends only on your own architecture decisions.

For a Lebanese SME competing with larger regional retailers, this distinction matters. You control your internal architecture entirely.

The Two-Layer Schema Implementation

Static site-wide identity + dynamic per-product data — generated at the right rendering stage.

Structured data on Revive WoodWork is not a single JSON-LD block added to the homepage. It is a two-layer system where each layer is generated at the appropriate rendering stage.

Layer 1 — Static Global Schema (Organization · WebSite · FurnitureStore)

Generated at build time. Present on every page. Establishes the entity graph.

The static schema layer runs as a @graph object containing three interconnected types:

Organization — Defines Revive WoodWork as a named entity with @id, logo, founding date, contact point, and sameAs links to Instagram and Facebook. This is the entity anchor that all other schema types reference.

WebSite — Links the site URL to the Organization entity, declares the creator as Analytics by Ghaith, and registers a SearchAction that maps the site's internal search to the schema's potentialAction — enabling sitelinks search box eligibility.

FurnitureStore — Classifies the business as a FurnitureStore (the correct schema type for a craft furniture retailer), with address (Kassara Street, Deir Ammar), geo-coordinates, opening hours, and areaServed set to both Lebanon and Worldwide.

All three types share @id references, forming a connected graph — not three isolated objects.
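A trimmed-down sketch of how such a connected graph could be assembled is shown below. The `@id` fragments, the search URL pattern, and the `publisher` and `parentOrganization` links are our assumptions for illustration. The schema types, site URL, address, and creator name come from the description above. Fields whose values are not given in this article (logo, founding date, geo-coordinates, opening hours) are left out rather than guessed.

```typescript
// Assumed @id convention: site URL plus a fragment per entity.
const SITE_URL = "https://revivelb.site";
const ORG_ID = `${SITE_URL}/#organization`;
const WEBSITE_ID = `${SITE_URL}/#website`;
const STORE_ID = `${SITE_URL}/#store`;

function buildGlobalGraph(): { "@context": string; "@graph": Array<Record<string, unknown>> } {
  return {
    "@context": "https://schema.org",
    "@graph": [
      { "@type": "Organization", "@id": ORG_ID, name: "Revive WoodWork", url: SITE_URL },
      {
        "@type": "WebSite",
        "@id": WEBSITE_ID,
        url: SITE_URL,
        publisher: { "@id": ORG_ID }, // links the site back to the Organization
        creator: { "@type": "Organization", name: "Analytics by Ghaith" },
        potentialAction: {
          "@type": "SearchAction",
          target: `${SITE_URL}/search?q={search_term_string}`, // assumed search URL pattern
          "query-input": "required name=search_term_string",
        },
      },
      {
        "@type": "FurnitureStore",
        "@id": STORE_ID,
        name: "Revive WoodWork",
        parentOrganization: { "@id": ORG_ID }, // same entity anchor as above
        address: {
          "@type": "PostalAddress",
          streetAddress: "Kassara Street",
          addressLocality: "Deir Ammar",
          addressCountry: "LB",
        },
        areaServed: ["Lebanon", "Worldwide"],
      },
    ],
  };
}

// Serialized once at build time and embedded in a JSON-LD script tag.
const globalJsonLd = JSON.stringify(buildGlobalGraph());
```

Because each node points at `ORG_ID` instead of repeating the organization's details, a parser can resolve all three nodes to a single entity.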

Layer 2 — Dynamic Product Schema (Product · BreadcrumbList)

Generated per-page at Static rendering time with tag-based revalidation.

Every product page generates its own @graph containing:

Product — Includes name, description, sku, brand (references the Organization @id), manufacturer (same reference), and category. The offers block uses AggregateOffer with priceCurrency, lowPrice, highPrice, availability, and itemCondition — all values required for Google Shopping eligibility.

BreadcrumbList — A four-level path: Home → Shop → Collection → Product. Each ListItem carries both name and item URL, making the breadcrumb machine-readable for rich results.
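The per-product layer could be generated along these lines. Treat it as a sketch: the input shape, the `#product` fragment, and every sample value are hypothetical, while the property names follow schema.org.

```typescript
interface ProductInput {
  name: string;
  description: string;
  sku: string;
  category: string;
  url: string;
  lowPrice: number;
  highPrice: number;
  currency: string;
  inStock: boolean;
}

interface Crumb {
  name: string;
  url: string;
}

// Builds the Product + BreadcrumbList graph; orgId must match the global Organization @id.
function buildProductGraph(product: ProductInput, crumbs: Crumb[], orgId: string) {
  const productNode = {
    "@type": "Product",
    "@id": `${product.url}#product`, // assumed fragment convention
    name: product.name,
    description: product.description,
    sku: product.sku,
    category: product.category,
    brand: { "@id": orgId },
    manufacturer: { "@id": orgId },
    offers: {
      "@type": "AggregateOffer",
      priceCurrency: product.currency,
      lowPrice: product.lowPrice,
      highPrice: product.highPrice,
      availability: product.inStock ? "https://schema.org/InStock" : "https://schema.org/OutOfStock",
      itemCondition: "https://schema.org/NewCondition",
    },
  };
  const breadcrumbNode = {
    "@type": "BreadcrumbList",
    itemListElement: crumbs.map((crumb, index) => ({
      "@type": "ListItem",
      position: index + 1, // breadcrumb positions are 1-based
      name: crumb.name,
      item: crumb.url,
    })),
  };
  return { "@context": "https://schema.org", "@graph": [productNode, breadcrumbNode] };
}
```

Passing the same organization `@id` that the global layer uses is what ties each product back to the brand entity.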

> The Schema Architecture Principle: Static schemas establish who you are. Dynamic schemas establish what you sell. Both must reference the same entity @id — otherwise crawlers treat them as unrelated signals.

The result is a structured entity graph where every product is linked to the Organization, every Organization is linked to the WebSite, and the FurnitureStore type provides the local business classification that feeds Google Maps and local search results simultaneously.

10. Semantic Density Optimization

AI crawlers penalize both thin content and keyword stuffing. The goal is contextual completeness.

Semantic density optimization ensures that every page communicates its topical intent to AI-era crawlers without redundancy or padding.

The controls applied:

Entity coverage modeling — verifying that core entities related to the topic are present in the content

Co-occurrence scoring — ensuring semantically related terms appear in natural proximity

TF-IDF balancing — managing term frequency relative to document length

NLP compression — removing filler content that dilutes semantic signal without adding user value
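Of the controls above, TF-IDF is the easiest to show in code. The sketch below uses the textbook formulation (term frequency normalized by document length, multiplied by the natural log of inverse document frequency). It is an illustration, not the scoring used on the project, and the function names are ours.

```typescript
// Lowercases and splits on anything that is not a letter or digit (ASCII only, for brevity).
function tokenize(text: string): string[] {
  return text.toLowerCase().split(/[^a-z0-9]+/).filter((token) => token.length > 0);
}

// tf  = occurrences of the term / tokens in the document
// idf = ln(number of documents / documents containing the term)
function tfIdf(term: string, docIndex: number, corpus: string[]): number {
  const docs = corpus.map(tokenize);
  const doc = docs[docIndex];
  if (doc === undefined || doc.length === 0) return 0;

  const needle = term.toLowerCase();
  const tf = doc.filter((token) => token === needle).length / doc.length;
  const containing = docs.filter((d) => d.indexOf(needle) !== -1).length;
  if (containing === 0) return 0;

  return tf * Math.log(corpus.length / containing);
}
```

A term that appears in every document scores zero, which is the behavior you want: words shared by every category page say nothing about what makes one page distinct.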

The measurable outcomes for Revive WoodWork:

No thin product descriptions relying on manufacturer copy

No keyword repetition across category page headers

High contextual clarity on every product type

Improved crawl efficiency — fewer pages consuming crawl budget without contributing authority

11. JavaScript Budget Enforcement

Performance budgets belong in CI/CD, not in post-launch audits.

Every JavaScript byte shipped to the browser is a liability against Performance and INP scores. The project enforced:

Bundle analyzer gates in the deployment pipeline — a build fails if the bundle size exceeds policy

< 90KB initial JavaScript target — all above-the-fold rendering completes within this budget

Dynamic imports for interaction-based loading — cart, wishlist, and filter UI load only when triggered

Third-party isolation via worker-based execution — analytics and tracking scripts run in Web Workers, off the main thread

No unused JavaScript reached production. This is a policy decision enforced automatically — not a manual cleanup exercise.
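A budget gate of this kind can be reduced to a few lines. The sketch below is a simplified stand-in for the pipeline check. The chunk shape and function name are hypothetical, and it reads the 90KB target as 90 × 1024 bytes without assuming whether sizes are measured compressed or uncompressed.

```typescript
// The article's "< 90KB" target, interpreted here as 90 KiB (an assumption).
const INITIAL_JS_BUDGET_BYTES = 90 * 1024;

interface Chunk {
  name: string;
  bytes: number;
  initial: boolean; // true if the chunk loads before any user interaction
}

interface BudgetReport {
  initialBytes: number;
  budgetBytes: number;
  withinBudget: boolean;
}

// Sums only the initial chunks; lazily loaded chunks do not count against the budget.
function checkBudget(chunks: Chunk[], budgetBytes: number = INITIAL_JS_BUDGET_BYTES): BudgetReport {
  const initialBytes = chunks
    .filter((chunk) => chunk.initial)
    .reduce((sum, chunk) => sum + chunk.bytes, 0);
  return { initialBytes, budgetBytes, withinBudget: initialBytes < budgetBytes };
}
```

In a pipeline, a failing report would translate into a non-zero exit code so the build stops before deployment.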

12. Accessibility Engineering — The Score That Validates Everything Else

A 96+ Accessibility score is not a compliance checkbox. It is an architectural quality signal.

Accessibility was implemented at component level, not retrofitted:

Complete ARIA labeling — every interactive element has a descriptive label for screen readers and AI parsers

Logical heading hierarchy — H1 → H2 → H3 structure is consistent across all page templates

Verified contrast ratios — all text/background combinations pass WCAG AA at minimum

Full keyboard navigation — the shop is fully operable without a mouse
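Contrast verification is one of the few items on this list that reduces to a formula. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio. The function names are ours, and colors are assumed to be 8-bit sRGB triples.

```typescript
type Rgb = [number, number, number]; // 8-bit sRGB channels, 0-255

// Converts one sRGB channel to linear light, per the WCAG 2.x definition.
function channelToLinear(value: number): number {
  const c = value / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

function relativeLuminance(color: Rgb): number {
  const [r, g, b] = color;
  return 0.2126 * channelToLinear(r) + 0.7152 * channelToLinear(g) + 0.0722 * channelToLinear(b);
}

// Ratio of the lighter to the darker luminance, each offset by 0.05.
function contrastRatio(a: Rgb, b: Rgb): number {
  const la = relativeLuminance(a);
  const lb = relativeLuminance(b);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

// WCAG AA requires 4.5:1 for normal text and 3:1 for large text.
function passesAA(foreground: Rgb, background: Rgb, largeText: boolean = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

Running a check like this over a design system's color tokens catches failing pairs before they reach a component.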

The accessibility score in Lighthouse is a proxy for structural quality. When heading hierarchies are logical and ARIA labels are complete, AI search engines process the content structure more reliably — which feeds directly into featured snippet eligibility and AI Overview inclusion.

13. Automated Performance Governance

Optimization is not an event. It is a continuous system.

The project integrated:

Lighthouse CI in the deployment pipeline — every pull request runs a full Lighthouse audit

Real User Monitoring (RUM) — field data from actual user sessions captures CWV in production conditions

Core Web Vitals alerts — deviations from established baselines trigger immediate notifications

Merge blocking — a PR that degrades Performance, Accessibility, Best Practices, or SEO scores below threshold does not merge
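The merge-blocking rule boils down to comparing category scores against a floor. The sketch below shows that logic in isolation. The default threshold of 96 is assumed from the target stated in this article, and the function names are hypothetical. Lighthouse CI has its own assertion configuration, which this does not reproduce.

```typescript
type LighthouseCategory = "performance" | "accessibility" | "best-practices" | "seo";

// Scores on Lighthouse's 0-100 scale.
type LighthouseScores = Record<LighthouseCategory, number>;

const ALL_CATEGORIES: LighthouseCategory[] = ["performance", "accessibility", "best-practices", "seo"];

// Returns every category that falls below the minimum score.
function findRegressions(scores: LighthouseScores, minScore: number = 96): LighthouseCategory[] {
  return ALL_CATEGORIES.filter((category) => scores[category] < minScore);
}

// A pull request may merge only when no category is below the floor.
function canMerge(scores: LighthouseScores, minScore: number = 96): boolean {
  return findRegressions(scores, minScore).length === 0;
}
```

Returning the list of failing categories, not just a boolean, lets the pipeline tell the author exactly which score blocked the merge.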

This moved performance governance from "quarterly SEO audit" to "continuous deployment gate." The scores are maintained, not occasionally restored.

14. The AI SEO Automation Layer

The system improves itself.

Beyond the static architecture, an AI automation layer runs on top of the content management system:

Meta title + description optimization via intent classifier — titles are scored against dominant query intent before publishing

Dynamic canonical logic — canonical tags are computed based on product variant hierarchy, not manually assigned

Internal linking suggestions — new content is analyzed for internal link opportunities against the existing topical map

Crawl budget prioritization — high-value pages are signaled for priority crawling; thin or duplicate pages are deprioritized

Content gap detection — the system flags entity coverage gaps in existing content against SERP competitor analysis
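Of these automations, dynamic canonical logic is the simplest to illustrate. The sketch assumes variants are expressed as query parameters on the parent product URL. That convention, the parameter names, and the example paths are hypothetical, not a description of the shop's routing.

```typescript
// Hypothetical variant parameters that should never create a separate canonical URL.
const VARIANT_PARAMS = ["variant", "size", "finish"];

// Strips variant parameters and fragments so every variant resolves to its parent URL.
function canonicalUrl(rawUrl: string): string {
  const url = new URL(rawUrl);
  for (const param of VARIANT_PARAMS) {
    url.searchParams.delete(param);
  }
  url.hash = "";
  return url.toString();
}
```

Parameters outside the variant list, such as pagination, are left untouched so that genuinely distinct pages keep distinct canonicals.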

This creates a self-improving SEO ecosystem. Each new piece of content strengthens the topical authority of the existing structure.

15. Measurable Outcomes — Desktop Lighthouse Scores

The numbers behind the architecture.

The technical decisions described above are not theoretical. The following scores are from a desktop Lighthouse audit run against the live production site at [revivelb.site](https://revivelb.site).

Performance — 98

Near-instant loading. Users see content within 0.3 seconds, with the largest visible elements rendered in under 0.6 seconds.

Accessibility — 91

Complete ARIA labeling, logical heading hierarchy, verified contrast ratios, and full keyboard navigation — embedded at component level.

Best Practices — 100

No deprecated APIs, no mixed content, no insecure requests. Full marks confirm the codebase meets modern browser and security standards.

SEO — 100

Every signal is in place: canonical tags, structured data, meta descriptions, crawlable link structure, and mobile-friendly rendering.

Core Web Vitals and Lighthouse Lab Metrics

First Contentful Paint (FCP) — 0.3 s

Largest Contentful Paint (LCP) — 0.6 s

Total Blocking Time (TBT) — 100 ms

Cumulative Layout Shift (CLS) — 0

Speed Index (SI) — 1.3 s

The Outcome Standard: A CLS of 0, an LCP under 1 second, and full marks on SEO and Best Practices are not the result of post-launch tweaks. They are the direct output of an architecture where every decision had a performance and crawlability rationale from the first commit.

These results demonstrate that high-performance ecommerce is achievable for a small Lebanese brand when architectural decisions are intentional from day one — not optimized post-launch.

The GAITH Framework (Internal Architecture Model)

What made this architecture repeatable.

The system described above does not exist as a collection of isolated techniques. It is a layered framework applied consistently:

Geographic Targeting — Lebanese market, Tripoli identity, Lebanon-wide shipping intent

Authority Engineering — Structural topical authority independent of backlink velocity

Intent Mapping — Every URL and every piece of copy anchored to a classified query cluster

Technical Hygiene — Core Web Vitals, structured data, and JavaScript budget enforced at CI level

Humanization — Copy that reads as a craftsperson talking to a buyer — not SEO filler

The 96+ Lighthouse score is the measurable output of that framework applied to a real Lebanese ecommerce brand.

Get Your Free Analytics by Ghaith Starter Plan

Enterprise-level visibility, free for life when you build with us.

Ready to build your first SEO-first website with enterprise-level performance from day one? When you launch your site with Dynamic ORD or through ghayth-abdallah.com, you will receive the Analytics by Ghaith Starter Plan — completely free for life.

The Starter Plan includes a full analytics dashboard built specifically for AI-era search. No stitching together tools. No switching between tabs. Everything in one place:

What's Included

Full GA4 + Google Search Console integration — unified data, single dashboard, zero tool-jumping

AI-powered summaries — your traffic and search data summarized automatically, with actionable insights surfaced without manual analysis

Core Web Vitals tracking — per-page performance monitoring, so you know exactly which pages are winning or losing on speed

SEO health monitoring — crawl issues, indexing gaps, and structured data errors flagged across your entire site

Web Master Tools — full control over how search engines see and access your content

Intent-driven insights — content recommendations based on how AI-era search engines classify your pages and queries

Export and filtering — slice your data by page, channel, date range, or query type and export it in one click

The Starter Plan Principle: You should not need an enterprise budget to see enterprise-level data about your own website.

Every site we build is designed to perform from day one — and the Analytics by Ghaith Starter Plan ensures you have the visibility to prove it.

Frequently Asked Questions

What is AI-First Development in web development?

AI-First Development means building with search intent and Core Web Vitals baked into every architectural decision — not patched on after launch. Rendering strategy, semantic structure, and infrastructure are all aligned to how AI search engines and LLMs process content.

How did you achieve 96+ across all Lighthouse categories?

By combining React Server Components, Partial Prerendering, strict JavaScript budget enforcement, ARIA-complete accessibility, and an AI-driven intent mapping layer — all governed by Lighthouse CI in the deployment pipeline.

What rendering strategy works best for ecommerce SEO?

A hybrid approach: ISR + Edge Caching for category pages, Static + Tag Revalidation for product pages, and Edge SSR for search results. Each page type has a different stability vs. personalization requirement.

How does semantic density affect AI SEO?

AI crawlers penalize both thin content and keyword stuffing. Semantic density optimization ensures contextual completeness without redundancy, improving both ranking signals and crawl efficiency.

Can a small ecommerce brand in Lebanon realistically achieve enterprise-level SEO scores?

Yes. The architecture is framework-driven, not budget-driven. The same Next.js App Router patterns applied to Revive WoodWork are available to any team willing to enforce deliberate standards from day one.


Written by

Ghaith Abdullah

AI SEO Expert and Search Intelligence Authority in the Middle East. Creator of the GAITH Framework™ and founder of Analytics by Ghaith. Specializing in AI-driven search optimization, Answer Engine Optimization, and entity-based SEO strategies.